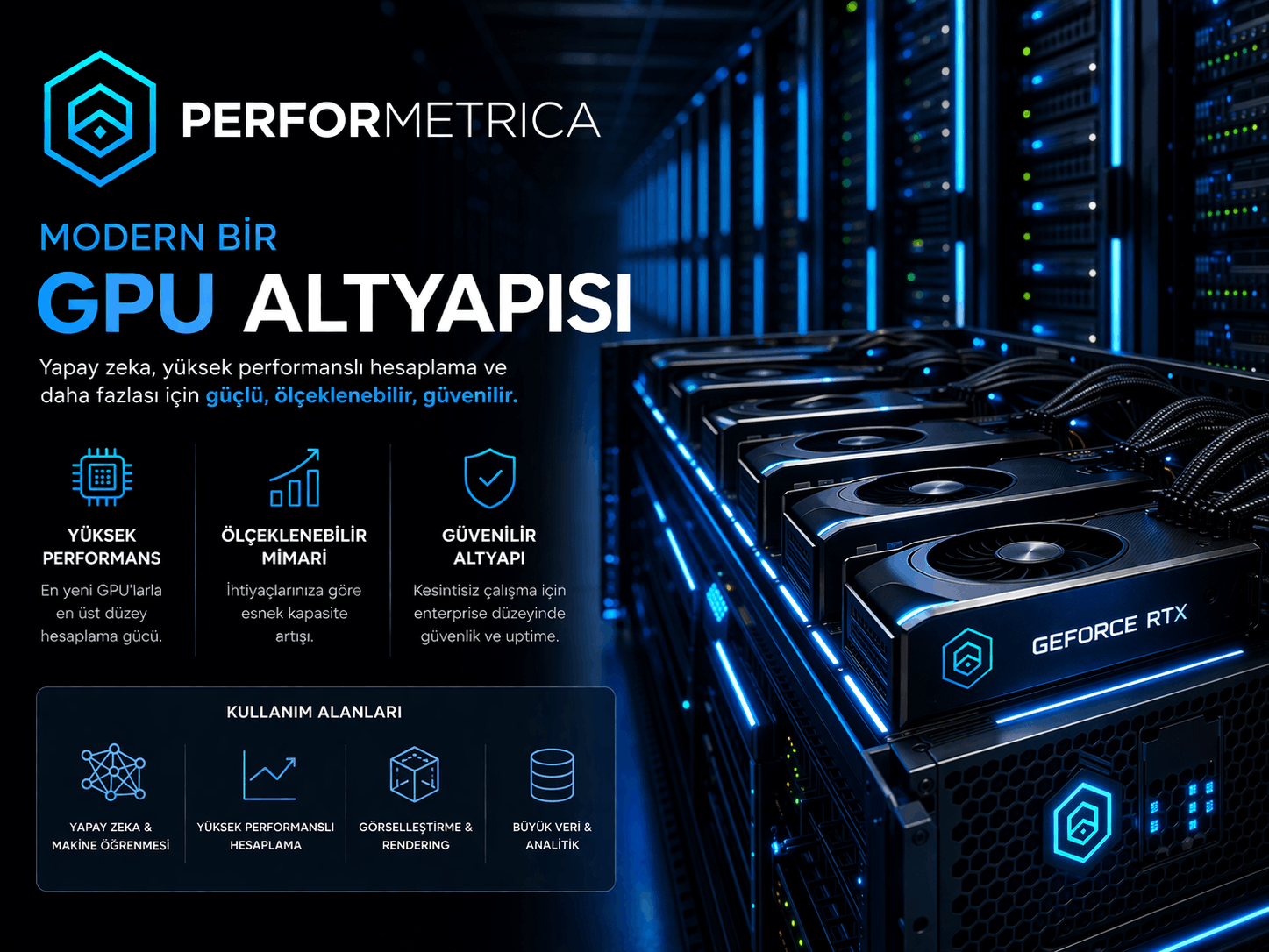

AI Infrastructure

A practical, production-oriented design approach for GPU infrastructure and LLM runtime environments.

Architecture Stages and Infrastructure Design

Deployment and Implementation Flow

Mapping data and model flow

Workloads are analyzed to identify CPU, GPU, memory, network and storage requirements. These requirements form the basis for system sizing.
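As a rough illustration of how workload analysis feeds system sizing, the sketch below estimates a lower bound on the number of GPUs needed just to hold model state. It assumes 2 bytes per parameter for FP16/BF16 inference weights and roughly 16 bytes per parameter for mixed-precision training with Adam; activations, KV cache and parallelism overhead are deliberately left out. The function name, the 80 GB card size and the 0.8 usable-memory fraction are illustrative assumptions, not part of the design approach itself.

```python
import math

# Assumed rules of thumb (model state only, no activations or KV cache).
BYTES_PER_PARAM_INFERENCE = 2   # FP16/BF16 weights
BYTES_PER_PARAM_TRAINING = 16   # FP16 weights + FP16 grads + FP32 Adam state


def min_gpus(params_billion: float, bytes_per_param: int,
             gpu_mem_gb: float, usable_fraction: float = 0.8) -> int:
    """Lower bound on GPUs required to hold model state in device memory."""
    # 1e9 parameters * bytes per parameter / 1e9 bytes per GB = GB
    state_gb = params_billion * bytes_per_param
    usable_gb = gpu_mem_gb * usable_fraction
    return math.ceil(state_gb / usable_gb)


if __name__ == "__main__":
    # Hypothetical 70B-parameter model on 80 GB GPUs.
    print("inference:", min_gpus(70, BYTES_PER_PARAM_INFERENCE, 80))
    print("training: ", min_gpus(70, BYTES_PER_PARAM_TRAINING, 80))
```

The result is only a floor: real deployments typically need more GPUs once batch size, sequence length and throughput targets are taken into account.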

Selecting the GPU platform and storage tier

Cluster architecture, node types, network topology and storage layers are designed. Capacity plans and growth scenarios are defined.
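A growth scenario can be expressed as a simple compound-growth projection. The sketch below is one possible way to do this, assuming GPU demand grows by a fixed fraction each year and that nodes are bought in whole units; `project_nodes` and all input values are hypothetical.

```python
import math


def project_nodes(current_gpus: int, annual_growth: float,
                  years: int, gpus_per_node: int = 8) -> list[int]:
    """Nodes required at the end of each year, starting with year 0 (today)."""
    plan = []
    for year in range(years + 1):
        # Compound growth in GPU demand, rounded up to whole GPUs.
        gpus = math.ceil(current_gpus * (1 + annual_growth) ** year)
        # Nodes come in whole units of gpus_per_node.
        plan.append(math.ceil(gpus / gpus_per_node))
    return plan


if __name__ == "__main__":
    # Hypothetical scenario: 16 GPUs today, 50% yearly growth, 2-year horizon.
    for year, nodes in enumerate(project_nodes(16, 0.5, 2)):
        print(f"year {year}: {nodes} nodes")
```

Running several growth rates side by side gives a quick view of when rack space, power or network ports would become the limiting factor.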

Framework / container / registry planning

The platform is deployed with the required software environment and framework stack, then validated and tuned.

Pilot cluster and capacity decision

Pilot results, scalability needs and operational targets are evaluated to finalize the production direction.
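One way to turn pilot measurements into a capacity decision is to extrapolate measured throughput to the production target. The sketch below assumes throughput scales linearly with GPU count, which is an optimistic simplification, and applies a headroom factor so the cluster is not planned at 100% load. The function name and the example figures are hypothetical.

```python
import math


def gpus_for_target(pilot_gpus: int, pilot_tokens_per_s: float,
                    target_tokens_per_s: float, headroom: float = 0.75) -> int:
    """GPUs needed for a target throughput, assuming linear scaling."""
    per_gpu = pilot_tokens_per_s / pilot_gpus
    # Plan each GPU at only `headroom` of its measured pilot throughput.
    return math.ceil(target_tokens_per_s / (per_gpu * headroom))


if __name__ == "__main__":
    # Hypothetical pilot: 4 GPUs sustained 2000 tokens/s; target is 12000.
    print(gpus_for_target(4, 2000, 12000))
```

If the pilot shows sub-linear scaling, the measured scaling efficiency should replace the linear assumption before the number is used for procurement.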

Architectural Approach and System Design

Model development, fine-tuning and inference layers should not be forced into the same hardware pattern. Each layer has its own cost and usage pattern and should be sized separately.
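To see why the layers deserve separate treatment, it can help to compare the effective cost of a GPU-hour at different utilization levels. The sketch below uses made-up hourly costs and utilization figures for three workload profiles; none of these numbers come from a real deployment.

```python
def cost_per_utilized_gpu_hour(hourly_cost: float, utilization: float) -> float:
    """Effective cost of one fully used GPU-hour at a given average utilization."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return hourly_cost / utilization


# Hypothetical profiles: (hourly cost per GPU, average utilization).
PROFILES = {
    "development": (4.0, 0.25),  # interactive, bursty usage
    "fine-tuning": (4.0, 0.80),  # scheduled batch jobs
    "inference": (2.0, 0.50),    # smaller GPUs, load follows user traffic
}

if __name__ == "__main__":
    for name, (cost, util) in PROFILES.items():
        print(f"{name}: {cost_per_utilized_gpu_hour(cost, util):.2f} per utilized GPU-hour")
```

A layer with low utilization on expensive hardware is a candidate for smaller GPUs, sharing or scheduling, rather than for the same node type used by long-running training jobs.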

NVLink/NVSwitch, power budget, rack cooling and the data tier are designed according to model size and iteration cadence.
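The power and cooling side of this can be checked with simple arithmetic. The sketch below assumes a fixed power budget per rack, a reserve for switches and other non-GPU equipment, and the standard conversion of 1 W to about 3.412 BTU/hr of heat. The rack and node wattages are placeholders to be replaced with vendor figures.

```python
import math

WATTS_TO_BTU_PER_HR = 3.412  # 1 W of IT load dissipates about 3.412 BTU/hr


def nodes_per_rack(rack_kw: float, node_kw: float,
                   reserve_fraction: float = 0.1) -> int:
    """Whole GPU nodes that fit in a rack's power budget after a reserve."""
    usable_kw = rack_kw * (1 - reserve_fraction)
    return math.floor(usable_kw / node_kw)


def cooling_btu_per_hr(it_load_kw: float) -> float:
    """Heat the cooling system must remove for a given IT load."""
    return it_load_kw * 1000 * WATTS_TO_BTU_PER_HR


if __name__ == "__main__":
    # Hypothetical rack: 40 kW budget, 10 kW per GPU node.
    n = nodes_per_rack(40, 10)
    print(n, "nodes,", round(cooling_btu_per_hr(n * 10)), "BTU/hr")
```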

Data management, security and container-based runtime environments are configured for multi-user operation.

Technical Deliverables and System Benefits

GPU platform design

GPU server architecture, networking, storage layer and capacity plan are delivered as a technical design document.

AI software environment

A tested platform is delivered ready for use, with CUDA, cuDNN, a container environment and AI frameworks installed.
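A first-pass acceptance check for such a platform might simply confirm that the expected command-line tools are on the `PATH`. The sketch below uses only the Python standard library; the tool list (`nvidia-smi`, `nvcc`, `docker`) is an assumed checklist, and it does not cover cuDNN or framework versions, which would need framework-level tests.

```python
import shutil

# Assumed checklist; adjust to the container runtime and toolkit in use.
REQUIRED_TOOLS = ("nvidia-smi", "nvcc", "docker")


def check_tools(tools=REQUIRED_TOOLS, which=shutil.which) -> dict:
    """Map each tool name to True if an executable is found on PATH."""
    return {tool: which(tool) is not None for tool in tools}


def missing_tools(report: dict) -> list:
    """Sorted names of the tools that were not found."""
    return sorted(tool for tool, found in report.items() if not found)


if __name__ == "__main__":
    missing = missing_tools(check_tools())
    print("all tools found" if not missing else f"missing: {', '.join(missing)}")
```

The `which` parameter exists so the check can be exercised in tests without a GPU host; on a real node the default `shutil.which` is used.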

AI operations model

User access, data management, model development, monitoring and resource planning processes are defined.